The Next Generation Modelling Systems Programme

Designing modelling systems for architectures that don't yet exist.

Why we need next generation modelling systems

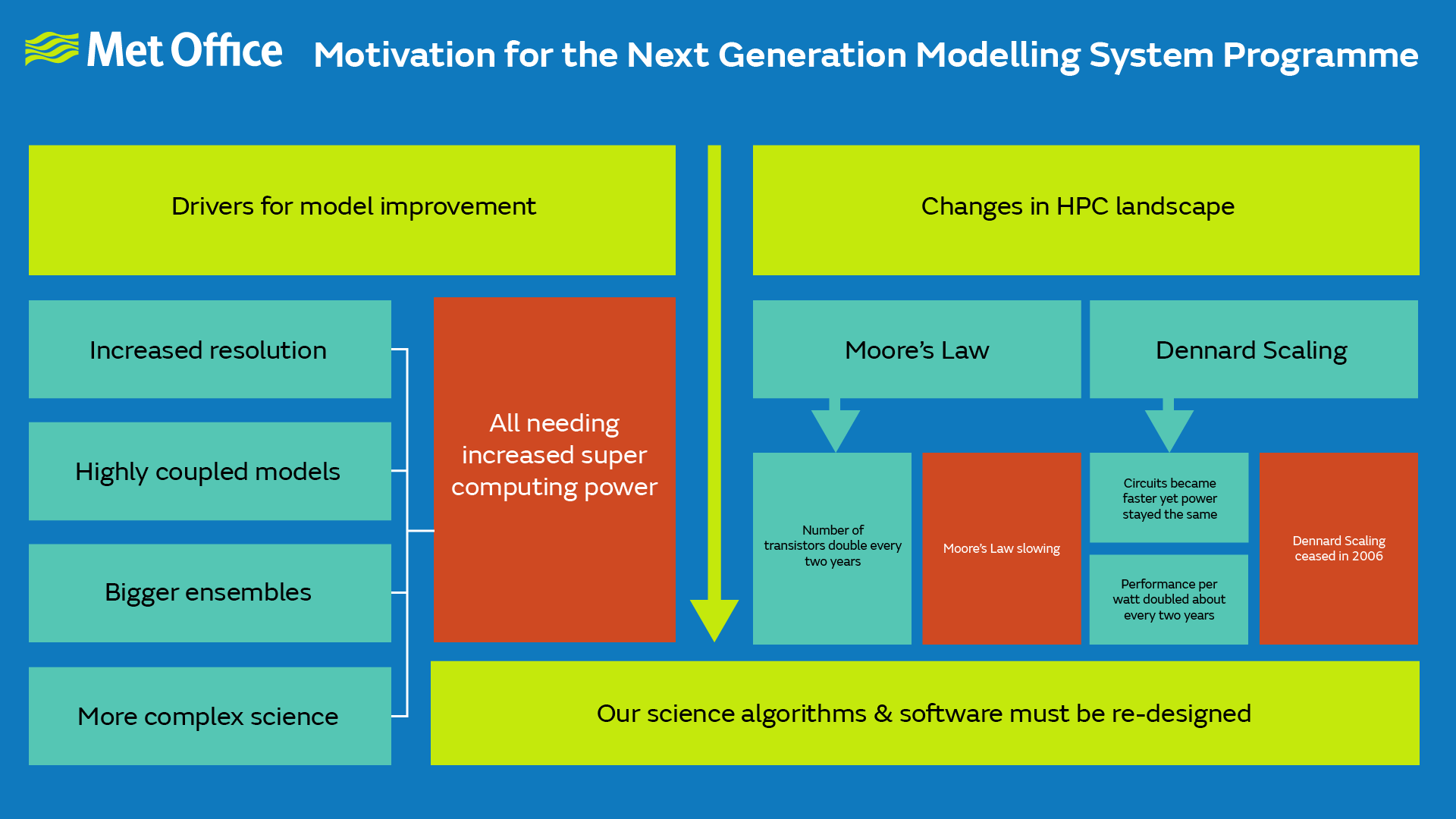

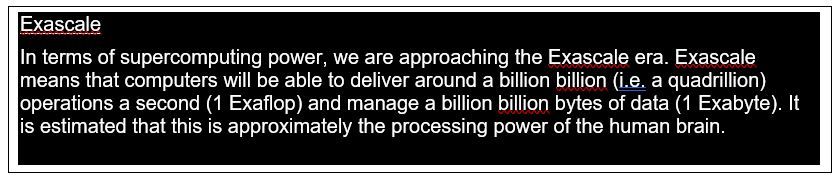

The power of supercomputers underpins our ability to deliver ever more accurate and useful weather forecasts and climate predictions. That power has increased exponentially since the origin of electronic computers in the 1950s. However, fundamental physical and engineering constraints mean that to continue that increase will require not only a huge further increase in the number of computing processors but also an explosion of new types of processors, and in particular supercomputer architectures that employ a mix of processor types (heterogeneous architectures).

To be able to exploit the power of these new architectures requires changes to the design of the weather and climate prediction systems – both the algorithms that emulate the physics of the atmosphere and oceans and the software infrastructures in which those algorithms are encoded.

Designing modelling systems for architectures that do not exist yet is clearly a challenge! It is therefore essential that the new systems are as flexible as possible and portable to different architectures without needing the software to be completely rewritten for each processor type. Fortunately, help is at hand. Modern software design and practices give the exciting prospect of applying the principle of separation of concerns, which separates hardware-specific aspects from science-specific aspects (see later for a little more detail). Developing and implementing this approach is itself a big challenge, though, and requires co-design between computational scientists, software engineers, and weather and climate scientists. But the rewards will be rich: more flexible and portable systems will be more usable and more efficient, freeing scientists from having to understand the complexities of specific computer architectures.

Aims of the programme

The Met Office has therefore established the Next Generation Modelling Systems Programme to reformulate and redesign the Met Office’s complete weather and climate research and operational/production systems, including oceans and the environment, to allow the Met Office and its partners to fully exploit future generations of supercomputers for the benefit of society. Recognising the importance of this endeavour, the Next Generation Modelling Systems Programme is one of the Met Office’s Corporate Strategic Actions; it is also one of the central themes of its Research and Innovation Strategy for the coming decade.

Programme timeline

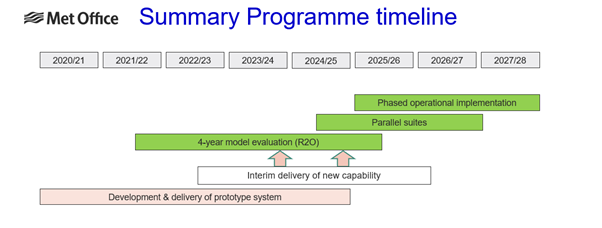

The programme is targeting implementation of the Next Generation Modelling Systems for operational Numerical Weather Prediction (NWP) towards the end of life of the Met Office’s new supercomputer ahead of porting to its replacement system, which is anticipated to be in 2027.

Improving the accuracy of weather forecasts and climate predictions

More accurate weather forecasts and climate predictions will be enabled by harnessing greater compute resources to increase the granularity with which the atmosphere and oceans are represented by the computer models, improve the representation of key physical processes, better represent the uncertainty in forecasts, and capture more extreme events further ahead in time. Additionally, by employing modern software practices in its redesign, the Next Generation Modelling Systems Programme will improve the usability, robustness, and flexibility of those modelling systems. This will improve the whole user experience and enable us to build new, exciting partnerships to deliver more science, more quickly.

Programme overview

The work of the Programme is organised under a series of projects which cover all aspects from the processing of observations, their ingestion into the modelling systems, through the various modelling systems needed for a complete Earth system simulation, to the visualisation and verification of the output of those systems. Specifically:

Next Generation Processing and Assimilation of Observations

Next Generation Processing and Assimilation of Observations is a component of the Next Generation Modelling Systems Programme and will deliver replacements for our current Observation Processing System (OPS) and Variational Assimilation System (VAR) for our global and regional weather forecasting capabilities. To achieve these goals, we have adopted the Joint Effort for Data assimilation Integration (JEDI) code framework developed by the Joint Center for Satellite Data Assimilation (JCSDA), which offers improved modularity and flexibility by using a generic object-oriented programming approach to create a standard interface between models, observations, and data assimilation algorithms. This framework will enable the Met Office to deliver the relevant capabilities and systems suitable for both academic use and operational implementation. The project is divided into two themes:

- JEDI-Based Observation Processing Application (JOPA)

  This theme is dedicated to the development of a new observation processing system to select and quality control the various observations used during the assimilation cycle.

- JEDI Application for Data Assimilation (JADA)

  This theme is dedicated to the development of a brand-new data assimilation system using JEDI as its framework.
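The idea of a standard interface between models, observations, and data assimilation algorithms can be illustrated with a small sketch. This is not the JEDI API (JEDI itself is a separate code framework from JCSDA); the class and function names below are hypothetical and chosen only to show how a generic algorithm can be written once against an abstract interface and reused with any observation type.

```python
from abc import ABC, abstractmethod
from typing import List, Sequence


class ObservationOperator(ABC):
    """Hypothetical interface: maps a model state to simulated observations."""

    @abstractmethod
    def simulate(self, state: Sequence[float]) -> List[float]:
        """Return the values the model state implies at the observation locations."""


class PointSampler(ObservationOperator):
    """Illustrative operator that reads the model state at fixed grid indices."""

    def __init__(self, indices: Sequence[int]) -> None:
        self.indices = list(indices)

    def simulate(self, state: Sequence[float]) -> List[float]:
        return [state[i] for i in self.indices]


def innovations(
    operator: ObservationOperator,
    state: Sequence[float],
    observations: Sequence[float],
) -> List[float]:
    """Generic step: observed minus simulated values, for any operator."""
    simulated = operator.simulate(state)
    if len(simulated) != len(observations):
        raise ValueError("operator output and observations differ in length")
    return [y - hx for y, hx in zip(observations, simulated)]


if __name__ == "__main__":
    model_state = [1.0, 2.0, 3.0]      # made-up model values
    observed = [1.5, 2.5]              # made-up observations
    print(innovations(PointSampler([0, 2]), model_state, observed))
```

Because `innovations` depends only on the abstract `simulate` method, a new observation type needs only a new operator class; the algorithm itself is unchanged.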

GungHo Atmospheric Science

The remit of the GungHo Atmospheric Science Project (GHASP) is to develop science code, within the LFRic infrastructure, that will form the basis of the atmospheric component of a global and regional forecast and climate model. This entails development of the GungHo dynamical core formulation and code implementation, as well as porting of the physical parametrisation schemes from the Unified Model (UM).

A key element of GungHo is the change from a latitude-longitude based array of points, with its clustering of points near the poles (the ‘pole problem’ or ‘polar singularity’), to a cubed-sphere based array of points which are more uniformly distributed.
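To make the pole problem concrete, the sketch below estimates the east-west distance between neighbouring points on a regular latitude-longitude grid at several latitudes, and compares it with a rough mean cell width on a cubed sphere. The Earth radius, the grid sizes, and the function names are illustrative assumptions rather than values from any Met Office configuration, and the cubed-sphere figure is a crude equal-area estimate that ignores the variation in cell size across each panel.

```python
import math

EARTH_RADIUS_KM = 6371.0  # approximate mean Earth radius (assumption)


def zonal_spacing_km(latitude_deg: float, n_longitudes: int) -> float:
    """East-west distance between neighbouring points on a regular lat-lon grid."""
    circle_km = 2.0 * math.pi * EARTH_RADIUS_KM * math.cos(math.radians(latitude_deg))
    return circle_km / n_longitudes


def cubed_sphere_mean_spacing_km(cells_per_edge: int) -> float:
    """Rough mean cell width when six panels of n x n cells share the sphere's area."""
    sphere_area = 4.0 * math.pi * EARTH_RADIUS_KM ** 2
    cell_area = sphere_area / (6 * cells_per_edge ** 2)
    return math.sqrt(cell_area)


if __name__ == "__main__":
    n_lon = 1024  # hypothetical number of longitude points
    for lat in (0.0, 45.0, 80.0, 89.0):
        print(f"lat {lat:5.1f}: {zonal_spacing_km(lat, n_lon):8.3f} km")
    # Hypothetical cubed-sphere resolution with a similar number of points round the equator
    print(f"cubed sphere: {cubed_sphere_mean_spacing_km(256):8.3f} km")
```

The latitude-longitude spacing shrinks in proportion to the cosine of latitude, so points crowd together towards the poles, whereas the cubed-sphere estimate is a single, roughly uniform value.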

The GHASP project delivered its final milestones in the Spring of 2023 and has now closed.

LFRic & LFRic Inputs

The LFRic project will deliver a comprehensive software infrastructure for running the next generation atmosphere model, comprising GungHo dynamics and UM physics running on a global cubed-sphere or regional latitude-longitude mesh, and also other components of the Met Office’s data assimilation system such as the linear model. These models need to be scalable and easily portable between current and future diverse supercomputer architectures. To this end, the design of LFRic imposes a separation of concerns between the science code, which implements the scientific algorithms and kernels, and the parallel code, which executes the science kernels using a range of different parallel strategies.
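The separation of concerns described above can be sketched in miniature. The example below is an illustration only, not LFRic or PSyclone code: the kernel, the relaxation formula, and the two execution functions are hypothetical. It shows a science kernel that knows nothing about parallelism, and a separate layer that decides how the kernel is applied across the field.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

Kernel = Callable[[float], float]


def relax_towards_reference(temperature: float) -> float:
    """Science kernel (hypothetical): nudge one cell's value towards 280.0."""
    return temperature + 0.1 * (280.0 - temperature)


def run_serial(kernel: Kernel, field: Sequence[float]) -> List[float]:
    """Parallel layer, strategy 1: apply the kernel cell by cell in order."""
    return [kernel(value) for value in field]


def run_threaded(kernel: Kernel, field: Sequence[float], workers: int = 4) -> List[float]:
    """Parallel layer, strategy 2: apply the same kernel using a thread pool."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(kernel, field))  # map preserves input order


if __name__ == "__main__":
    field = [270.0, 280.0, 290.0]  # made-up cell values
    # The science result does not depend on the execution strategy.
    assert run_serial(relax_towards_reference, field) == run_threaded(
        relax_towards_reference, field
    )
    print(run_serial(relax_towards_reference, field))
```

The kernel is written once, and swapping `run_serial` for `run_threaded` changes only how it is executed. In this sketch the execution layer is written by hand purely for illustration.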

A tool called PSyclone is being developed to generate and optimise the parallel code for a range of different compute architectures in support of both compute performance and portability. The LFRic project works very closely with other projects that are developing or using LFRic-based models, including Research to Operations, which is generating the new operational forecasting suites, and Processing and Assimilation of Observations, which is building the data processing and assimilation systems. The LFRic Inputs project has been created to develop LFRic-based tools to manipulate the files generated and used by the LFRic atmosphere model as part of the flow of data through the overall modelling system.

Marine Systems

Since the current marine systems run at the Met Office do not suffer from the same polar singularity issues as the atmosphere model, the associated codes (including NEMO, NEMOVAR and WAVEWATCH III) will be maintained within the Next Generation Modelling Systems. However, the models still need to be prepared for the changes in future supercomputing architectures. Therefore, for each of the marine systems the project includes implementation of cost-effective improvements for Central Processing Unit (CPU)-based machines, adaptation of the codes to Graphics Processing Unit (GPU)-based machines, and development of a strategy for adaptation to other future architectures, exploring the same separation of concerns approach that is being used for the atmosphere model.

Next Generation Atmosphere Composition (NG-Composition)

This project will port the United Kingdom Chemistry and Aerosol (UKCA) sub-model of the UM to LFRic, with a particular focus on the gas-phase chemistry. Aerosol modelling capability is being ported as part of the GungHo Atmospheric Science project.

Next Generation Component Model Coupling

This project has developed an atmosphere-ocean-ice-land-hydrology model based on GHASP, the NEMO marine system and the TRIP river routing component. The different scientific components have been coupled together using the OASIS3-MCT coupler. The project delivered its final milestone in the Summer of 2023 and is now closed.

Next Generation Verification System

This project will implement a replacement for the current Met Office Verification System (VER) used for both global and regional model atmosphere verification. The replacement will also provide verification for the ocean models.

The current VER system is over 20 years old and would not be able to produce verification on the cubed-sphere mesh of the Next Generation Modelling Systems’ atmosphere model without significant rewriting. The Model Evaluation Tools (MET) verification package, together with the METplus Python wrappers that were developed by the National Center for Atmospheric Research (NCAR) Developmental Testbed Center, have been chosen as the replacement. MET is open source, widely used in research communities, and is being implemented for operational use at the US National Centers for Environmental Prediction (NCEP).

Next Generation Research to Operations (NG-R2O)

This project is responsible for pulling together the work delivered by other Next Generation Modelling Systems projects and related programmes (such as those responsible for delivering our global and regional configurations) into coherent Numerical Weather Prediction (NWP) systems and preparing these systems for operational implementation on the Met Office’s next supercomputing system by 2027/28. The scope includes the global NWP system (which is a coupled atmosphere-ocean-land-sea ice system), the UK NWP system, regional NWP for other areas of the globe, and any other NWP models (including sub-km NWP) that may be implemented between now and 2027/28.

Next Generation Research to Climate Use

The Next Generation Modelling Systems: Research to Climate Use project will make, in collaboration with other Next Generation Modelling Systems projects, the necessary developments to ensure that climate needs are well understood to enable timely adoption of the new systems for key climate science and service activities. The project will also develop climate-specific workflows to exploit the new capabilities of next-generation supercomputing systems.

Next Generation Atmospheric-dispersion Modelling

This project will ensure that the Numerical Atmospheric-dispersion Modelling Environment (NAME) continues to be an effective Lagrangian-Eulerian dispersion model which can use NWP data from LFRic. It will ensure that NAME can continue to deliver operational services efficiently on future supercomputing architectures. Coupling NAME with LFRic so that the two models can run together at the same time will also be investigated.

Next Generation Training & Usability

The Next Generation Modelling Systems: Training and Usability project will support the smooth transition of UM users to the Next Generation Modelling Systems. It has two main aims. The first is to develop training materials, including instructor-led training (face to face and virtual), self-service training and an external training catalogue. The second is focused on User Experience (UX) and will produce recommendations for UX improvement.

Eventually the project will also support the transition of training material maintenance and course delivery to business as usual in a sustainable way.

Next Generation Integration

The Next Generation Integration project will deliver a holistic critical path analysis and plan for the delivery of the necessary technical capabilities, to ensure that resources are focused on the necessary tasks and that these tasks are achievable on a timeline that supports the delivery of the programme. It will also focus on delivery of technical capabilities that are needed to support multiple projects within the programme (such as model I/O, software stacks and cloud-based deployment of software) as well as the interface between the programme and its future downstream customers. This project is essential to provide a higher-level and forward-looking view of the route to operationalisation of our Next Generation Modelling Systems. It will help to identify future risks and issues ahead of time.

Regional LFRic Model Evaluation

The Regional LFRic Model Evaluation project is responsible for delivering an LFRic-based third Regional Atmosphere and Land (RAL3) research configuration. This will be a well-tested and understood baseline, suitable for research purposes and future developments, for use by a variety of Met Office groups and collaborators including UM Partners and academic partners.

To achieve this aim, the project will develop LFRic-based regional research capabilities (e.g., workflows to run the model and evaluation and diagnostic tools). This will enable the scientific evaluation of RAL3 for LFRic, provide evidence of it performing sufficiently well to meet defined acceptance criteria, and identify aspects required for improvement through the subsequent RAL4 development cycle.

Global LFRic Model Evaluation

The goal of this project is to put together, evaluate and deliver a Global Coupled (GC) model configuration within the LFRic framework. This configuration will then be the basis for further model development before the operational implementation of an LFRic-based Global Coupled weather forecasting system in 2026. This project will bring together capability from other projects: the new science code that forms the basis of the atmosphere component, delivered by the GungHo Atmospheric Science project; the capability to couple the atmosphere-ocean-ice-land-hydrology components of the system, delivered by the Next Generation Component Model Coupling project; and the new Next Generation Verification System. It will work closely with Research to Operations to integrate all the components into coherent systems. In addition to the technical integration work, this project will undertake high-level and process-based evaluation activities with participation from a range of teams across the Met Office and Partner institutions.

LFRic Model Optimisation

This project aims to improve the speed of execution of the LFRic atmospheric model to meet operational requirements. The project will measure how the model performance (speed of execution) scales with the number of processors or nodes of the supercomputer. PSyclone can already generate parallel code for CPU architectures. This project will extend PSyclone to further exploit parallelism for such architectures and improve the scaling. It will also develop the necessary software infrastructure that can execute on other processor architectures such as Graphics Processing Units (GPUs). Other optimisations, both algorithmic and computational, will be developed during the project.
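Measuring how performance scales with node count usually comes down to two derived quantities: speedup relative to a baseline run, and parallel efficiency (the fraction of ideal speedup achieved). The sketch below shows that arithmetic for a fixed problem size (strong scaling). The function name and the timings are made up for illustration and are not LFRic measurements.

```python
from typing import Dict


def scaling_table(timings: Dict[int, float]) -> Dict[int, Dict[str, float]]:
    """Compute speedup and parallel efficiency relative to the smallest node count.

    `timings` maps node count to wall-clock time in seconds for the same problem.
    """
    if not timings:
        raise ValueError("at least one timing is required")
    base_nodes = min(timings)
    base_time = timings[base_nodes]
    table: Dict[int, Dict[str, float]] = {}
    for nodes in sorted(timings):
        speedup = base_time / timings[nodes]
        ideal_speedup = nodes / base_nodes
        table[nodes] = {
            "speedup": speedup,
            "efficiency": speedup / ideal_speedup,
        }
    return table


if __name__ == "__main__":
    # Hypothetical wall-clock times in seconds for one fixed-size run
    measured = {1: 100.0, 2: 50.0, 4: 40.0}
    for nodes, row in scaling_table(measured).items():
        print(f"{nodes:3d} nodes: speedup {row['speedup']:.2f}, "
              f"efficiency {row['efficiency']:.3f}")
```

An efficiency close to 1 means extra nodes are being used well; a falling efficiency, as in the last made-up row, is the kind of signal that points to where optimisation effort is needed.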

Next Generation Visualisation, Analysis & Tools

The Visualisation, Analysis and Tools project aimed to advance the scientific visualisation and model evaluation tools needed to support the Next Generation Modelling Systems by exploiting the Met Office-led open-source Iris Python package. With most current tooling only able to handle data on structured meshes such as the UM’s latitude-longitude mesh, new tooling is required to manage and exploit the cubed-sphere mesh of the Next Generation Modelling Systems’ atmosphere model. Iris has been extended to support the analysis and visualisation of unstructured UGRID data, as output from the LFRic atmospheric model. The project delivered its final milestone in the Winter of 2021 and is now closed.

Fab Build System

The Fab project (https://github.com/metomi/fab) set out to create an extract and build tool that is suitable for supporting the innovative technical design of LFRic. Using an accessible, flexible, and extensible Python interface, Fab facilitates both conventional and novel extract/build steps, including support for PSyclone. Additionally, Fab provides an entry-level ‘zero configuration’ mode to enable developers of smaller code bases to focus on high-quality scientific and technical outcomes more efficiently.

The adoption of Fab for LFRic applications will facilitate retirement of incumbent solutions that are increasingly difficult to maintain and apply to the challenges presented by LFRic’s cutting edge technical design.

Fab is released under the open-source BSD 3-Clause licence and hosted on GitHub to facilitate open engagement with the global community.

The Fab project delivered its final milestone in the Spring of 2023 and is now closed.

Working in partnership

The Next Generation Modelling Systems Programme is large and complex. Its success depends on the efforts of many partners. Some of these are long-standing partners, such as the operational and research centres around the world that make up the UM Partnership. The Bureau of Meteorology (Australia), the National Institute of Water and Atmospheric Research (New Zealand) and a US Air Force partner, Oak Ridge National Laboratory, have been contributing to the programme’s technical infrastructure around code generation, visualisation, and the porting of physics routines. Others are new, such as the Joint Center for Satellite Data Assimilation and the National Center for Atmospheric Research (both in the United States), who have led the development of the JEDI infrastructure and METplus respectively. And for some, such as the Science and Technology Facilities Council’s Hartree Centre, who have developed PSyclone, it has reinvigorated long-term collaborations.